MCP Connectors

MCP connectors are tools to connect to MCP servers. They provide full MCP protocol support including tools, resources, resource templates, and prompts.

You can find a list of pre-built MCP servers in this repository.

We will add examples using pre-built entities from our repository.

Supported MCP capabilities

MCP connectors support the full MCP protocol:

| Capability | Description |
|---|---|
| Tools | Discover and call tools exposed by the MCP server |
| Resources | List and read resources (files, data, documents, etc.) from the MCP server. Resources are returned with uri, name, description, and mimeType. Contents can be text or binary (blob). |
| Resource Templates | List parameterized resource templates from the MCP server. Templates are returned with uriTemplate, name, description, and mimeType. |
| Prompts | List and retrieve prompt templates from the MCP server. Prompts include name, description, and their arguments (each with name, description, and required). Getting a prompt returns its description and messages (each with role and content). |

All capabilities support filtering via include/exclude patterns and advanced rules. See the Filtering and Advanced rules sections below.
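
As an illustration of the "text or binary (blob)" distinction for resource contents, here is a minimal Python sketch of how a client might decode one content entry. It assumes the entry is a JSON object carrying either a text field or a base64-encoded blob field, as in the MCP specification; the exact shape returned by the connector is not shown in this page, and the function name and sample URIs are hypothetical.

```python
import base64
from typing import Any, Dict, Union


def decode_resource_content(content: Dict[str, Any]) -> Union[str, bytes]:
    """Return text contents as str and blob contents as raw bytes.

    Assumption: 'blob' holds a base64-encoded string, as in the MCP
    specification; the connector's exact output shape is not confirmed here.
    """
    if "text" in content:
        return content["text"]
    if "blob" in content:
        return base64.b64decode(content["blob"])
    raise ValueError("resource content has neither 'text' nor 'blob'")


# Hypothetical sample entries, for illustration only.
text_entry = {"uri": "file:///notes.txt", "mimeType": "text/plain", "text": "hello"}
blob_entry = {
    "uri": "file:///logo.png",
    "mimeType": "image/png",
    "blob": base64.b64encode(b"\x89PNG").decode("ascii"),
}

decoded_text = decode_resource_content(text_entry)
decoded_blob = decode_resource_content(blob_entry)
print(type(decoded_text).__name__, type(decoded_blob).__name__)
```

Keeping the two cases in one helper lets calling code branch on the returned type rather than on the raw JSON shape.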

Configuration

Here is the MCP connector configuration:

{

"_loc": {

"tenant": "default",

"teams": [

"default"

]

},

"id": "mcp-connector_23d9b4db-1593-426e-b205-b5f331f78f1d",

"name": "github-mcp",

"description": "github-mcp",

"metadata": {},

"tags": [],

"pool": {

"size": 1

},

"transport": {

"kind": "stdio",

"options": {

"command": "npx",

"args": [

"-y",

"@modelcontextprotocol/server-github"

],

"env": {

"GITHUB_PERSONAL_ACCESS_TOKEN": "${vault://local/mcp-github-token}"

}

}

},

"strict": false,

"kind": "ai-gateway.extensions.cloud-apim.com/McpConnector"

}

Here is the LLM provider configuration:

{

"_loc": {

"tenant": "default",

"teams": [

"default"

]

},

"id": "provider_480ec0b7-bc9e-487f-8376-b9b8111bfe5e",

"name": "OpenAI provider",

"description": "An OpenAI LLM api provider",

"metadata": {},

"tags": [],

"provider": "openai",

"connection": {

"base_url": "https://api.openai.com/v1",

"token": "${vault://local/openai-token}",

"timeout": 30000

},

"options": {

"model": "gpt-4o-mini",

"frequency_penalty": null,

"logit_bias": null,

"logprobs": null,

"top_logprobs": null,

"max_tokens": null,

"n": 1,

"presence_penalty": null,

"response_format": null,

"seed": null,

"stop": null,

"stream": false,

"temperature": 1,

"top_p": 1,

"tools": null,

"tool_choice": null,

"user": null,

"wasm_tools": [],

"mcp_connectors": [

"mcp-connector_23d9b4db-1593-426e-b205-b5f331f78f1d"

],

"allow_config_override": true

},

"provider_fallback": null,

"context": {

"default": null,

"contexts": []

},

"models": {

"include": [],

"exclude": []

},

"guardrails": [],

"guardrails_fail_on_deny": false,

"cache": {

"strategy": "none",

"ttl": 300000,

"score": 0.8

},

"kind": "ai-gateway.extensions.cloud-apim.com/Provider"

}

Here is the route configuration:

{

"_loc": {

"tenant": "default",

"teams": [

"default"

]

},

"id": "route_79c1d15ae-5e64-482c-9a29-4b7dcad36089",

"name": "mcp-openai",

"description": "mcp-openai",

"tags": [],

"metadata": {},

"enabled": true,

"debug_flow": false,

"export_reporting": false,

"capture": false,

"groups": [

"default"

],

"bound_listeners": [],

"frontend": {

"domains": [

"mcp-openai.oto.tools"

],

"strip_path": true,

"exact": false,

"headers": {},

"query": {},

"methods": []

},

"backend": {

"targets": [

{

"id": "target_1",

"hostname": "request.otoroshi.io",

"port": 443,

"tls": true,

"weight": 1,

"predicate": {

"type": "AlwaysMatch"

},

"protocol": "HTTP/1.1",

"ip_address": null,

"tls_config": {

"certs": [],

"trusted_certs": [],

"enabled": false,

"loose": false,

"trust_all": false

}

}

],

"root": "/",

"rewrite": false,

"load_balancing": {

"type": "RoundRobin"

},

"client": {

"retries": 1,

"max_errors": 20,

"retry_initial_delay": 50,

"backoff_factor": 2,

"call_timeout": 30000,

"call_and_stream_timeout": 120000,

"connection_timeout": 10000,

"idle_timeout": 60000,

"global_timeout": 30000,

"sample_interval": 2000,

"proxy": {},

"custom_timeouts": [],

"cache_connection_settings": {

"enabled": false,

"queue_size": 2048

}

},

"health_check": {

"enabled": false,

"url": "",

"timeout": 5000,

"healthyStatuses": [],

"unhealthyStatuses": []

}

},

"backend_ref": null,

"plugins": [

{

"enabled": true,

"debug": false,

"plugin": "cp:otoroshi.next.plugins.OverrideHost",

"include": [],

"exclude": [],

"config": {},

"bound_listeners": [],

"plugin_index": {

"transform_request": 0

},

"nodeId": "cp:otoroshi.next.plugins.OverrideHost"

},

{

"enabled": true,

"debug": false,

"plugin": "cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins.OpenAiCompatProxy",

"include": [],

"exclude": [],

"config": {

"refs": [

"provider_480ec0b7-bc9e-487f-8376-b9b8111bfe5e"

]

},

"bound_listeners": [],

"plugin_index": {},

"nodeId": "cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins.OpenAiCompatProxy"

}

],

"kind": "proxy.otoroshi.io/Route"

}

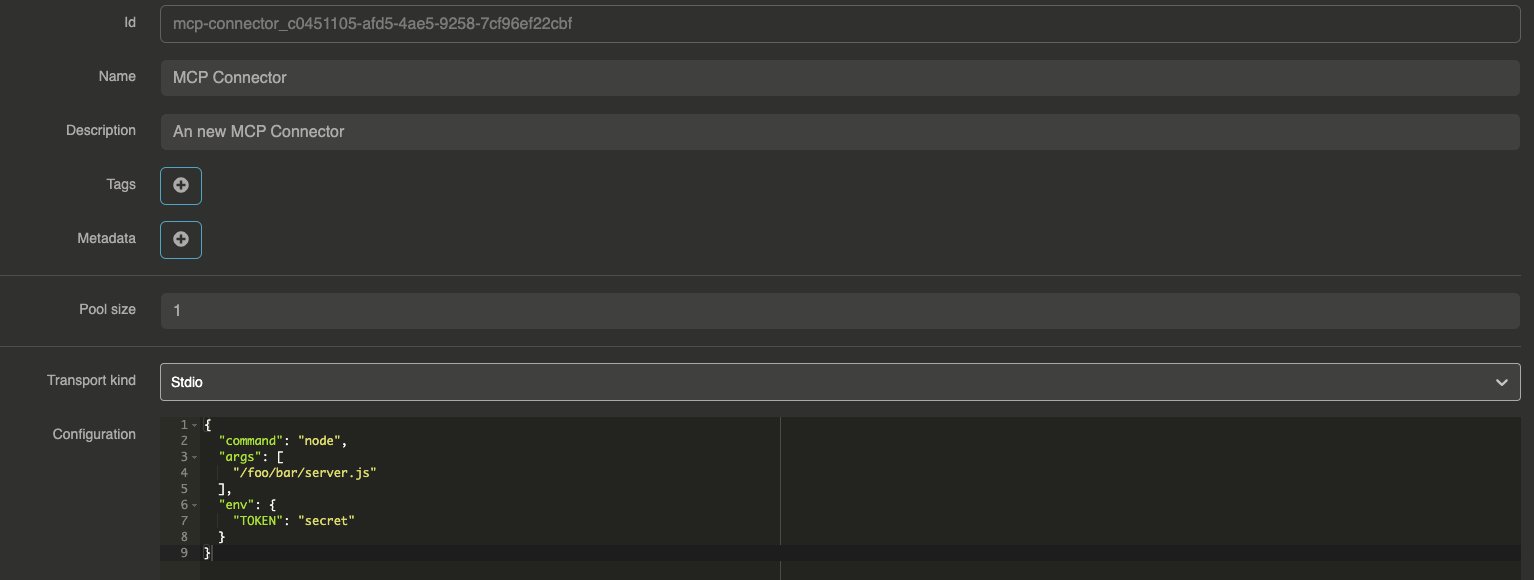

Default config

{

"command": "node",

"args": [

"/foo/bar/server.js"

],

"env": {

"TOKEN": "secret"

}

}

Example with Filesystem MCP Server

Filesystem MCP Connector configuration

{

"command": "npx",

"args": [

"-y",

"@modelcontextprotocol/server-filesystem",

"/Users/your-user/Desktop"

]

}

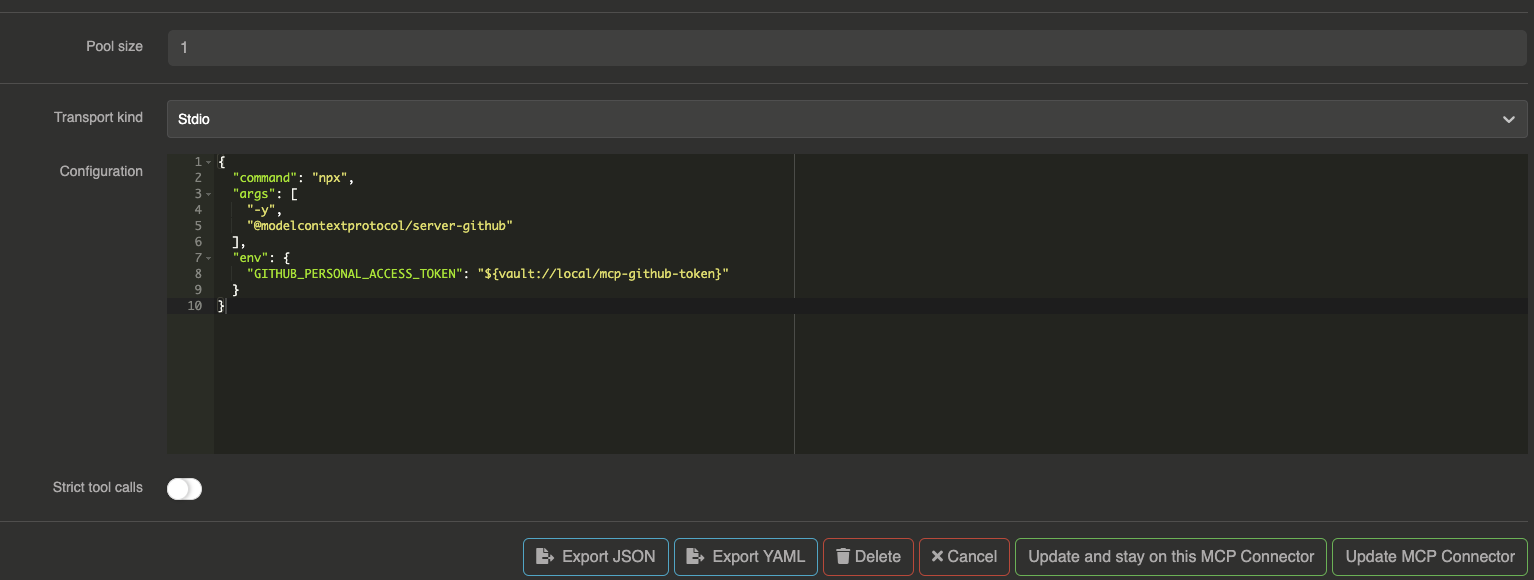

Example with GitHub MCP Server

To use the GitHub MCP server, you need to generate a GitHub access token.

Then store the token in the local vault.

In Otoroshi, open the danger zone and go to the Global Metadata section.

There, create a new key/value pair like the following (in the Otoroshi environment):

{

"mcp-github-token": "YOUR_GITHUB_TOKEN_HERE"

}

GitHub MCP Connector configuration

{

"command": "npx",

"args": [

"-y",

"@modelcontextprotocol/server-github"

],

"env": {

"GITHUB_PERSONAL_ACCESS_TOKEN": "${vault://local/mcp-github-token}"

}

}

You can now use the GitHub MCP server's functions to create issues, list issues, list pull requests, and much more from your favorite LLMs.

Filtering tools, resources and prompts

MCP connectors expose tools, resources, resource templates, and prompts from the MCP server. You can filter which ones are available using include/exclude patterns. All patterns support regex matching.

Filtering tools (functions)

Use include_functions and exclude_functions to control which tools are exposed:

{

"id": "mcp-connector_xxxxx",

"name": "filtered-mcp",

"transport": { ... },

"include_functions": ["get_.*", "list_.*"],

"exclude_functions": ["delete_.*", "drop_.*"]

}
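
To make the effect of these patterns concrete, here is a minimal Python sketch of include/exclude filtering. It is not the connector's implementation. It assumes that an empty include list allows everything, that a pattern must match the whole name, and that exclude wins over include; the function name and the sample tool names are hypothetical.

```python
import re
from typing import Iterable, List


def filter_names(names: Iterable[str], include: List[str], exclude: List[str]) -> List[str]:
    """Keep names allowed by the include patterns and not hit by any exclude pattern.

    Assumptions (not confirmed by this page): an empty include list allows
    everything, patterns must match the whole name, and exclude wins.
    """
    kept = []
    for name in names:
        included = not include or any(re.fullmatch(p, name) for p in include)
        excluded = any(re.fullmatch(p, name) for p in exclude)
        if included and not excluded:
            kept.append(name)
    return kept


# Hypothetical tool names, filtered with the patterns from the configuration above.
tools = ["get_issue", "list_issues", "delete_repository", "create_issue"]
visible = filter_names(tools, ["get_.*", "list_.*"], ["delete_.*", "drop_.*"])
print(visible)  # ['get_issue', 'list_issues']
```

Python's regex dialect may differ in small ways from the one used by the gateway, so treat this as a way to reason about patterns rather than as an exact validator.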

Filtering resources

Use include_resources and exclude_resources to control which resources are exposed:

{

"include_resources": ["users", "projects"],

"exclude_resources": ["admin_.*"]

}

Filtering resource templates

Use include_resource_templates and exclude_resource_templates to filter resource templates by name, and include_resource_template_uris / exclude_resource_template_uris to filter by URI template:

{

"include_resource_templates": ["user_template"],

"exclude_resource_templates": [],

"include_resource_template_uris": ["file://.*"],

"exclude_resource_template_uris": ["file:///etc/.*"]

}

Filtering prompts

Use include_prompts and exclude_prompts to control which prompts are exposed:

{

"include_prompts": ["summarize", "translate"],

"exclude_prompts": ["debug_.*"]

}

Complete filtering example

{

"id": "mcp-connector_xxxxx",

"name": "filtered-github-mcp",

"transport": {

"kind": "stdio",

"options": {

"command": "npx",

"args": ["-y", "@modelcontextprotocol/server-github"],

"env": {

"GITHUB_PERSONAL_ACCESS_TOKEN": "${vault://local/mcp-github-token}"

}

}

},

"include_functions": ["list_.*", "get_.*", "search_.*"],

"exclude_functions": ["delete_.*"],

"include_resources": [],

"exclude_resources": [],

"include_prompts": [],

"exclude_prompts": []

}

Advanced rules

Beyond simple include/exclude patterns, MCP connectors support advanced rules based on JsonPath validation. Rules allow you to control access to tools, resources, resource templates, and prompts based on the request context (consumer identity, apikey metadata, request details, etc.).

Rules are organized by category and can target specific items by name or all items using the * wildcard.

Rule structure

{

"allow_rules": {

"tool_rules": {

"sensitive_tool": [

{ "path": "$.apikey.metadata.role", "value": "admin" }

],

"*": [

{ "path": "$.apikey.enabled", "value": true }

]

},

"prompt_rules": { ... },

"resource_rules": { ... },

"resource_templates_rules": { ... }

},

"disallow_rules": {

"tool_rules": {

"dangerous_tool": [

{ "path": "$.apikey.metadata.env", "value": "production" }

]

},

"prompt_rules": { ... },

"resource_rules": { ... },

"resource_templates_rules": { ... }

}

}

How rules work

- Disallow rules are evaluated first: if a disallow rule matches, the item is blocked

- Allow rules are evaluated next: if an allow rule is defined for the item, it must match for the item to be accessible

- If no rule is defined for an item, it is accessible by default

Rules use Otoroshi's JsonPathValidator format to validate against the request context, which includes consumer identity, apikey metadata, request headers, and more.

Example: restrict tools by consumer role

{

"allow_rules": {

"tool_rules": {

"create_repository": [

{ "path": "$.apikey.metadata.github_role", "value": "maintainer" }

],

"delete_repository": [

{ "path": "$.apikey.metadata.github_role", "value": "admin" }

]

}

},

"disallow_rules": {

"tool_rules": {

"delete_repository": [

{ "path": "$.apikey.metadata.env", "value": "production" }

]

}

}

}

In this example:

- create_repository is only available to consumers with github_role: maintainer in their apikey metadata

- delete_repository is only available to consumers with github_role: admin, and is always blocked in production environments

Authentication passthrough

MCP connectors can forward the consumer's authentication token to the MCP server. This is useful when the MCP server needs to authenticate the consumer or apply its own authorization logic.

In the transport headers configuration, use the {input_token} placeholder to inject the consumer's Bearer token:

{

"transport": {

"kind": "sse",

"options": {

"url": "https://my-mcp-server.example.com/sse",

"headers": {

"Authorization": "Bearer {input_token}"

}

}

}

}

The {input_token} placeholder is replaced at runtime with the Bearer token from the incoming request's Authorization header. This works with SSE, WebSocket, and Streamable HTTP transports.
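
The substitution can be pictured with the following Python sketch. It is an illustration under stated assumptions, not the gateway's code: the function names are hypothetical, and the behavior when the incoming request carries no Bearer token (here, templated headers are dropped) is an assumption that this page does not specify.

```python
from typing import Dict, Optional

PLACEHOLDER = "{input_token}"


def extract_bearer_token(request_headers: Dict[str, str]) -> Optional[str]:
    """Return the Bearer token from the Authorization header, if any."""
    for key, value in request_headers.items():
        if key.lower() == "authorization":
            scheme, _, token = value.partition(" ")
            if scheme.lower() == "bearer" and token.strip():
                return token.strip()
    return None


def render_transport_headers(
    templates: Dict[str, str], request_headers: Dict[str, str]
) -> Dict[str, str]:
    """Replace the placeholder in the configured transport headers."""
    token = extract_bearer_token(request_headers)
    if token is None:
        # Assumption: without a token, headers that need it are left out.
        return {k: v for k, v in templates.items() if PLACEHOLDER not in v}
    return {k: v.replace(PLACEHOLDER, token) for k, v in templates.items()}


configured = {"Authorization": "Bearer {input_token}", "X-Static": "1"}
incoming = {"authorization": "Bearer abc123"}
rendered = render_transport_headers(configured, incoming)
print(rendered)
```

Header names are compared case-insensitively because HTTP header names are not case-sensitive.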

Postgres MCP Server

User prompt to the LLM

{

"messages": [

{

"role": "user",

"content": "Could you list me all the users from the 'users' table please ?"

}

]

}

Curl request

curl --request POST \

--url http://mcp-pg-openai.oto.tools:8080/ \

--header 'content-type: application/json' \

--data '{

"messages": [

{

"role": "user",

"content": "Could you list me all the users from the '\''users'\'' table please ?"

}

]

}'
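
The same request can also be sent from a script. The sketch below uses only the Python standard library and the URL from the curl command above; the function names are hypothetical, and it assumes the response follows the chat completion shape used elsewhere on this page.

```python
import json
import urllib.request

# URL taken from the curl example; adjust it to your own route.
GATEWAY_URL = "http://mcp-pg-openai.oto.tools:8080/"


def build_chat_request(prompt: str, url: str = GATEWAY_URL) -> urllib.request.Request:
    """Build the POST request carrying a single user message."""
    body = json.dumps({"messages": [{"role": "user", "content": prompt}]}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, url: str = GATEWAY_URL, timeout: float = 30.0) -> str:
    """Send the prompt and return the assistant's message content."""
    with urllib.request.urlopen(build_chat_request(prompt, url), timeout=timeout) as resp:
        payload = json.load(resp)
    # Assumption: the response contains at least one choice with a message.
    return payload["choices"][0]["message"]["content"]


request = build_chat_request("Could you list me all the users from the 'users' table please ?")
print(request.get_method(), request.full_url)
```

Calling ask(...) with the same prompt performs the actual HTTP call, so it requires the route and the MCP connector to be running.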

LLM response:

{

"id": "chatcmpl-3WFxjlu6MWpvw4ZaOGtyc4OqvFycGDP4",

"object": "chat.completion",

"created": 1743155603,

"model": "gpt-4o-mini",

"system_fingerprint": "fp-RvGvrs3QlRhPqmXM7NqGd61qeOPudtEZ",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": "Here are all the users from the 'users' table:\n\n1. **Alice Johnson**\n - Email: alice@example.com\n - Age: 30\n - Address: 123 Main St, Springfield\n - Phone: 123-456-7890\n - Created At: 2025-03-28T09:47:34.121Z\n\n2. **Bob Smith**\n - Email: bob@example.com\n - Age: 25\n - Address: 456 Elm St, Shelbyville\n - Phone: 987-654-3210\n - Created At: 2025-03-28T09:47:34.121Z\n\n3. **Charlie Brown**\n - Email: charlie@example.com\n - Age: 35\n - Address: 789 Oak St, Capital City\n - Phone: 555-123-4567\n - Created At: 2025-03-28T09:47:34.121Z"

},

"logprobs": null,

"finish_reason": "stop"

}

],

"usage": {

"prompt_tokens": 342,

"completion_tokens": 214,

"total_tokens": 556,

"completion_tokens_details": {

"reasoning_tokens": 0

}

}

}

Enabling the no personal information guardrail

The No personal information guardrail blocks any request whose prompt to the LLM, or whose response from the LLM, contains personal information.

Warning: to enable the guardrail, you need to create another provider, which is used to apply the guardrail rule.

Response from the LLM:

{

"id": "chatcmpl-5iTwZroMP6B3qKEWqfHLbvcVsQhucOHE",

"object": "chat.completion",

"created": 1743177176,

"model": "gpt-4o-mini",

"system_fingerprint": "fp-1HS2LIBA0cK0N8rBVi30YVUbayejNDAo",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": "This message has been blocked by the 'personal-information' guardrail !"

},

"logprobs": null,

"finish_reason": "stop"

}

],

"usage": {

"prompt_tokens": 0,

"completion_tokens": 0,

"total_tokens": 0,

"completion_tokens_details": {

"reasoning_tokens": 0

}

}

}

Full provider configuration with guardrail:

{

"_loc": {

"tenant": "default",

"teams": [

"default"

]

},

"id": "provider_6bfe8012-1ccb-4884-bef2-00e307862e7e",

"name": "OpenAI provider + Postgres MCP",

"description": "OpenAI provider + Postgres MCP",

"metadata": {},

"tags": [],

"provider": "openai",

"connection": {

"base_url": "https://api.openai.com/v1",

"token": "${vault://local/openai-token}",

"timeout": 30000

},

"options": {

"model": "gpt-4o-mini",

"frequency_penalty": null,

"logit_bias": null,

"logprobs": null,

"top_logprobs": null,

"max_tokens": null,

"n": 1,

"presence_penalty": null,

"response_format": null,

"seed": null,

"stop": null,

"stream": false,

"temperature": 1,

"top_p": 1,

"tools": null,

"tool_choice": null,

"user": null,

"wasm_tools": [],

"mcp_connectors": [

"mcp-connector_c0451105-afd5-4ae5-9258-7cf96ef22cbf"

],

"allow_config_override": true

},

"provider_fallback": null,

"context": {

"default": null,

"contexts": []

},

"models": {

"include": [],

"exclude": []

},

"guardrails": [

{

"enabled": true,

"before": true,

"after": true,

"id": "pif",

"config": {

"provider": "provider_fc0b30ef-35e2-4a5d-8286-8b0e24f0b028",

"pif_items": [

"EMAIL_ADDRESS",

"PHONE_NUMBER",

"LOCATION_ADDRESS",

"NAME",

"IP_ADDRESS",

"CREDIT_CARD",

"SSN"

]

}

}

],

"guardrails_fail_on_deny": false,

"cache": {

"strategy": "none",

"ttl": 300000,

"score": 0.8

},

"kind": "ai-gateway.extensions.cloud-apim.com/Provider"

}