Expose your LLM Provider

Now that your provider is fully set up, you can expose it to your organization. You do this through an Otoroshi route with a backend plugin that forwards incoming requests to the actual LLM provider.

OpenAI compatible plugins

We provide a set of plugins that expose any supported LLM provider through an OpenAI-compatible API. This is the standard way to expose an LLM provider.

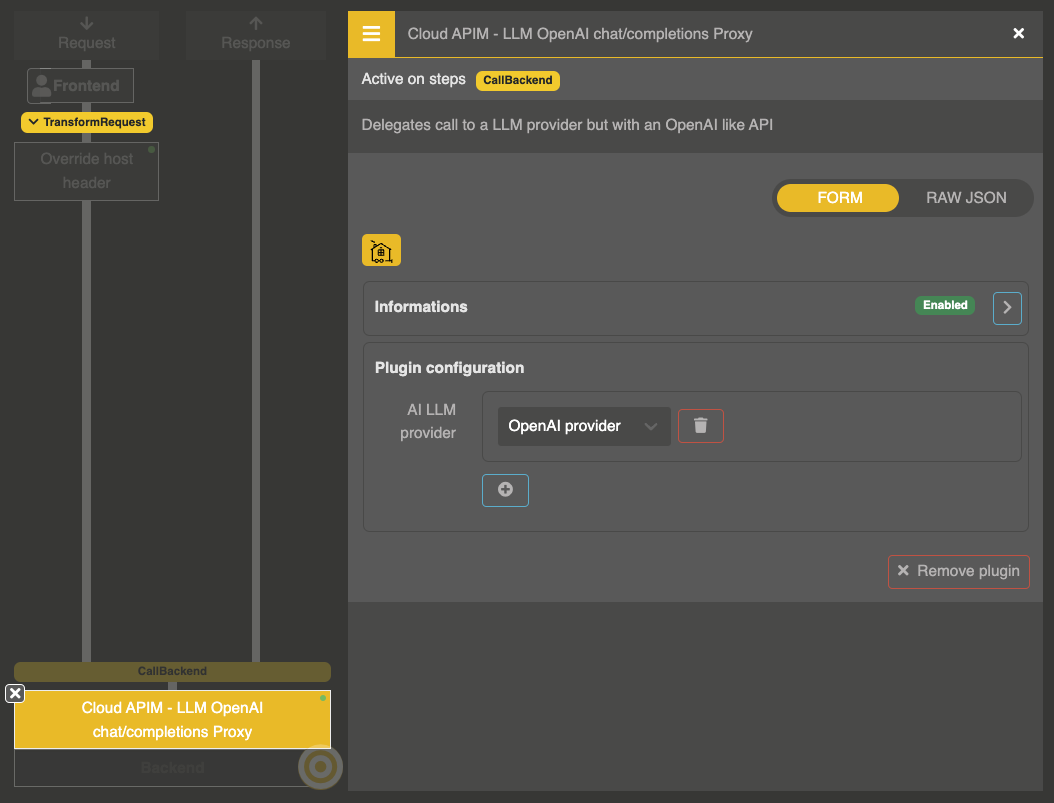

Chat completions proxy

Plugin: Cloud APIM - LLM OpenAI chat/completions Proxy

cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins.OpenAiCompatProxy

This plugin is compatible with the OpenAI chat completion API, including streaming.
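
In the OpenAI API, streaming is requested by adding "stream": true to the request body, and the reply then arrives as server-sent events: one data: line per JSON chunk, ending with data: [DONE]. The Python sketch below shows how a client might reassemble the text from such lines. It assumes the proxy emits the standard OpenAI chunk shape (choices[0].delta.content); the helper name collect_stream_text and the sample lines are illustrative, not taken from the plugin.

```python
import json
from typing import Iterable


def collect_stream_text(lines: Iterable[str]) -> str:
    """Concatenate the content deltas found in OpenAI-style SSE lines."""
    parts = []
    for raw in lines:
        line = raw.strip()
        # Skip blank separator lines and anything that is not a data event.
        if not line.startswith("data:"):
            continue
        payload = line[len("data:"):].strip()
        # Assumption: the stream ends with the OpenAI "[DONE]" sentinel.
        if payload == "[DONE]":
            break
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            content = choice.get("delta", {}).get("content")
            if content:
                parts.append(content)
    return "".join(parts)


# Hand-written sample lines, not captured from a real provider.
sample = [
    'data: {"choices": [{"index": 0, "delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"index": 0, "delta": {"content": "Hello"}}]}',
    'data: {"choices": [{"index": 0, "delta": {"content": "!"}}]}',
    "data: [DONE]",
]
print(collect_stream_text(sample))
```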

Configuration

    {
      "refs": ["provider_entity_id_1", "provider_entity_id_2"]
    }

| Parameter | Type | Description |
|---|---|---|
| refs | array | List of LLM Provider entity IDs |

Usage

    curl https://my-own-llm-endpoint.on.otoroshi/v1/chat/completions \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $OTOROSHI_API_KEY" \
      -d '{
        "model": "gpt-4o",
        "messages": [
          {
            "role": "user",
            "content": "Hello how are you!"
          }
        ]
      }'

Response:

    {
      "id": "chatcmpl-B9MBs8CjcvOU2jLn4n570S5qMJKcT",
      "object": "chat.completion",
      "created": 1741569952,
      "model": "gpt-4o",
      "choices": [
        {
          "index": 0,
          "message": {
            "role": "assistant",
            "content": "Hello! How can I assist you today?"
          },
          "finish_reason": "stop"
        }
      ],
      "usage": {
        "prompt_tokens": 19,
        "completion_tokens": 10,
        "total_tokens": 29
      }
    }
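
The same call can be made from code. Here is a minimal Python sketch using only the standard library. It reuses the placeholder endpoint and the OTOROSHI_API_KEY variable from the curl example; the function names are illustrative and there is no error handling.

```python
import json
import os
import urllib.request

# Placeholder endpoint, same as in the curl example.
BASE_URL = "https://my-own-llm-endpoint.on.otoroshi"


def build_chat_request(api_key: str, model: str, user_message: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for the chat completions route."""
    body = {"model": model, "messages": [{"role": "user", "content": user_message}]}
    return urllib.request.Request(
        f"{BASE_URL}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response: dict) -> str:
    """Return the assistant text of the first choice."""
    return response["choices"][0]["message"]["content"]


def ask(user_message: str, model: str = "gpt-4o") -> str:
    """Send the request and return the assistant reply (needs network access)."""
    request = build_chat_request(os.environ["OTOROSHI_API_KEY"], model, user_message)
    with urllib.request.urlopen(request) as http_response:
        return extract_reply(json.load(http_response))
```

Calling ask("Hello how are you!") against a live route would return the content string of the first choice.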

Model routing

When multiple providers are configured in refs, you can target a specific provider using the model field:

- Slash syntax: providerName/modelName (e.g., my_openai_provider/gpt-4o)
- Hash syntax: providerId###modelName (e.g., provider_xxx###gpt-4o)

If no provider prefix is specified, the first configured ref is used.
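
To make these rules concrete, here is a Python sketch of how such a model field could be resolved. It is an illustration of the rules as described here, not the plugin's actual implementation. The function pick_ref, the names_to_refs mapping, and the fallback for unrecognized prefixes are all assumptions.

```python
from typing import Dict, List, Tuple


def pick_ref(model: str, refs: List[str], names_to_refs: Dict[str, str]) -> Tuple[str, str]:
    """Return (provider_ref, model_name) for a request's model field.

    refs is the ordered list from the plugin configuration;
    names_to_refs maps provider names to entity IDs (hypothetical lookup).
    """
    # Hash syntax: providerId###modelName
    if "###" in model:
        provider_id, model_name = model.split("###", 1)
        if provider_id in refs:
            return provider_id, model_name
    # Slash syntax: providerName/modelName
    elif "/" in model:
        provider_name, model_name = model.split("/", 1)
        if provider_name in names_to_refs:
            return names_to_refs[provider_name], model_name
    # No recognized prefix: use the first configured ref and keep the
    # model string untouched (assumed behavior for unknown prefixes).
    return refs[0], model


refs = ["provider_xxx", "provider_yyy"]
names = {"my_openai_provider": "provider_yyy"}
print(pick_ref("my_openai_provider/gpt-4o", refs, names))
print(pick_ref("provider_xxx###gpt-4o", refs, names))
print(pick_ref("gpt-4o", refs, names))
```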

Text completions proxy

Plugin: Cloud APIM - LLM OpenAI completions Proxy

cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins.OpenAiCompletionProxy

This plugin is compatible with the OpenAI completions API, including streaming. It converts the legacy completions format (prompt field) to the internal chat format.
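
As an illustration of that conversion, the Python sketch below turns a legacy completions payload into a chat-style payload. The exact mapping is internal to the plugin. This version assumes a string prompt that becomes a single user message, and the function name completion_to_chat is hypothetical.

```python
def completion_to_chat(payload: dict) -> dict:
    """Convert a legacy completions payload into a chat-style payload.

    Assumption: the prompt is a plain string and becomes one user message.
    The echo and suffix options have no chat equivalent, so this sketch
    leaves them out of the converted payload.
    """
    legacy_only = ("prompt", "echo", "suffix")
    chat = {key: value for key, value in payload.items() if key not in legacy_only}
    chat["messages"] = [{"role": "user", "content": payload["prompt"]}]
    return chat


legacy = {"model": "gpt-4o", "prompt": "Once upon a time", "max_tokens": 100}
print(completion_to_chat(legacy))
```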

Configuration

    {
      "refs": ["provider_entity_id"]
    }

| Parameter | Type | Description |
|---|---|---|
| refs | array | List of LLM Provider entity IDs |

Usage

    curl https://my-own-llm-endpoint.on.otoroshi/v1/completions \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $OTOROSHI_API_KEY" \
      -d '{
        "model": "gpt-4o",
        "prompt": "Once upon a time",
        "max_tokens": 100
      }'

The plugin also supports the echo parameter (include the prompt in the response) and the suffix parameter.

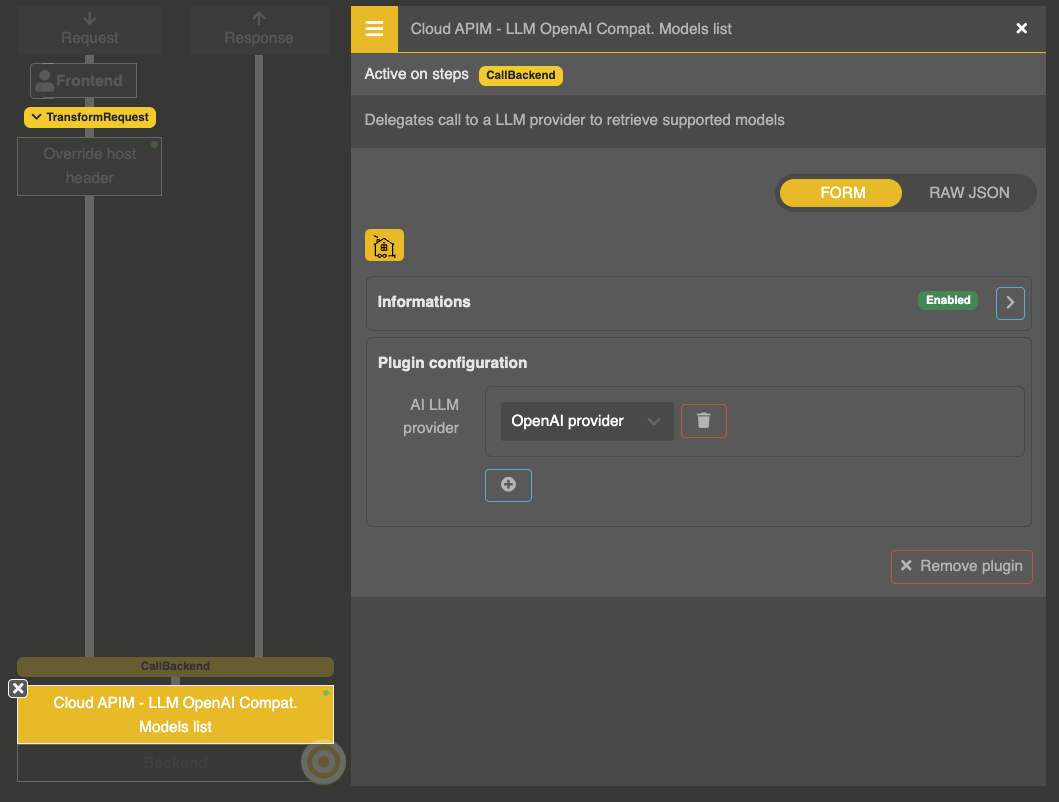

Models list

Plugin: Cloud APIM - LLM OpenAI Compat. Models list

cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins.OpenAiCompatModels

Exposes the list of models offered by the configured providers, in a format compatible with the OpenAI models API.

Configuration

    {
      "refs": ["provider_entity_id"]
    }

| Parameter | Type | Description |
|---|---|---|
| refs | array | List of LLM Provider entity IDs |

Usage

    curl https://my-own-llm-endpoint.on.otoroshi/v1/models \
      -H "Authorization: Bearer $OTOROSHI_API_KEY"

Response:

    {
      "object": "list",
      "data": [
        {
          "id": "o1-mini",
          "object": "model",
          "created": 1686935002,
          "owned_by": "openai"
        },
        {
          "id": "gpt-4",
          "object": "model",
          "created": 1686935002,
          "owned_by": "openai"
        }
      ]
    }

When multiple providers are configured, model IDs are prefixed with the provider slug name (e.g., my_openai/gpt-4o). Use ?raw=true to get the raw model identifiers without prefixes.
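
A client that needs both forms can handle the prefix itself. The Python sketch below builds the models URL with or without raw=true and strips a provider prefix from a prefixed ID. The helper names are illustrative. The prefix stripping assumes the raw model ID contains no slash of its own, which may not hold for every provider.

```python
from urllib.parse import urlencode


def models_url(base_url: str, raw: bool = False) -> str:
    """Return the models list URL, optionally asking for unprefixed IDs."""
    url = f"{base_url.rstrip('/')}/v1/models"
    if raw:
        url = f"{url}?{urlencode({'raw': 'true'})}"
    return url


def strip_provider_prefix(model_id: str) -> str:
    """Drop the leading 'provider/' part of a prefixed model ID, if any."""
    # Assumption: everything before the first slash is the provider slug.
    return model_id.split("/", 1)[1] if "/" in model_id else model_id


print(models_url("https://my-own-llm-endpoint.on.otoroshi", raw=True))
print(strip_provider_prefix("my_openai/gpt-4o"))
```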

Models list with provider info

Plugin: Cloud APIM - LLM OpenAI Compat. Provider with Models list

cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins.OpenAiCompatProvidersWithModels

Similar to the models list, but enriches each model entry with provider metadata:

    {
      "object": "list",
      "data": [
        {
          "id": "my_openai/gpt-4o",
          "combined_id": "my_openai/gpt-4o",
          "simple_id": "gpt-4o",
          "provider_id": "my_openai",
          "owned_by": "OpenAI",
          "owned_by_with_model": "OpenAI / gpt-4o",
          "object": "model",
          "created": 1686935002
        }
      ]
    }
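
The extra fields make it easy to build a provider-oriented view, for example for a model picker. A short Python sketch that groups the simple model IDs by provider follows. The function name models_by_provider is illustrative, and the sample data has been trimmed to the fields the function reads.

```python
from collections import defaultdict
from typing import Dict, List


def models_by_provider(listing: dict) -> Dict[str, List[str]]:
    """Group simple model IDs by provider_id, keeping the listing order."""
    grouped = defaultdict(list)
    for entry in listing.get("data", []):
        grouped[entry["provider_id"]].append(entry["simple_id"])
    return dict(grouped)


listing = {
    "object": "list",
    "data": [
        {"id": "my_openai/gpt-4o", "simple_id": "gpt-4o", "provider_id": "my_openai"},
    ],
}
print(models_by_provider(listing))
```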

LLM response endpoint

Plugin: Cloud APIM - LLM Response endpoint

cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins.LlmResponseEndpoint

Provides an endpoint that returns raw LLM responses from a predefined prompt. The prompt supports Expression Language for dynamic values based on the request context.

Configuration

    {
      "ref": "provider_entity_id",
      "prompt": "Summarize the following text: ${req.body.text}",
      "prompt_ref": null,
      "context_ref": null
    }

| Parameter | Type | Description |
|---|---|---|
| ref | string | LLM Provider entity ID |
| prompt | string | Prompt text with optional Expression Language variables |
| prompt_ref | string | Optional reference to a stored prompt configuration |
| context_ref | string | Optional reference to a stored context configuration |

Response

    {
      "generations": [
        {
          "message": {
            "role": "assistant",
            "content": "..."
          }
        }
      ]
    }
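
On the client side, using this endpoint comes down to sending the values the prompt expects and reading the first generation. The Python sketch below assumes that ${req.body.text} in the example configuration resolves to the text field of a JSON request body. Both helper names are illustrative.

```python
import json


def build_summary_body(text: str) -> bytes:
    """Encode the JSON body whose 'text' field the example prompt refers to."""
    # Assumption: ${req.body.text} reads the "text" key of the JSON body.
    return json.dumps({"text": text}).encode("utf-8")


def first_generation_text(response: dict) -> str:
    """Return the content of the first generation, or '' if there is none."""
    generations = response.get("generations", [])
    if not generations:
        return ""
    return generations[0]["message"]["content"]


sample_response = {
    "generations": [{"message": {"role": "assistant", "content": "A short summary."}}]
}
print(build_summary_body("Some long text"))
print(first_generation_text(sample_response))
```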

Anthropic compatible plugin

We also provide an Anthropic Messages API compatible proxy that allows any Anthropic API client (including Claude Code) to use any LLM provider managed by Otoroshi. See the dedicated documentation page for details.

Open Responses compatible plugin

We provide an Open Responses API compatible proxy that allows any client speaking the Open Responses specification to use any LLM provider managed by Otoroshi. See the dedicated documentation page for details.

Route example

A complete route configuration exposing a chat completions endpoint with a models list:

    {
      "frontend": {
        "domains": ["llm.my-domain.com"]
      },
      "backend": {
        "targets": [
          {
            "hostname": "request.otoroshi.io",
            "port": 443,
            "tls": true
          }
        ]
      },
      "plugins": [
        {
          "enabled": true,
          "plugin": "cp:otoroshi.next.plugins.OverrideHost",
          "config": {}
        },
        {
          "enabled": true,
          "plugin": "cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins.OpenAiCompatProxy",
          "includes": [
            "/v1/chat/completions"
          ],
          "config": {
            "refs": ["provider_openai_1"]
          }
        },
        {
          "enabled": true,
          "plugin": "cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins.OpenAiCompatModels",
          "includes": [
            "/v1/models"
          ],
          "config": {
            "refs": ["provider_openai_1"]
          }
        }
      ]
    }
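
If you manage several such routes, it can help to generate this document instead of copying it. The Python sketch below rebuilds the same structure from a domain and a list of provider refs. The helpers build_llm_route and llm_plugin are illustrative names. The sketch only produces the JSON and does not push it to Otoroshi.

```python
import json
from typing import List

PLUGIN_PREFIX = "cp:otoroshi_plugins.com.cloud.apim.otoroshi.extensions.aigateway.plugins."


def llm_plugin(class_name: str, path: str, refs: List[str]) -> dict:
    """Describe one LLM plugin bound to a single path."""
    return {
        "enabled": True,
        "plugin": PLUGIN_PREFIX + class_name,
        "includes": [path],
        "config": {"refs": list(refs)},
    }


def build_llm_route(domain: str, refs: List[str]) -> dict:
    """Build a route exposing chat completions and the models list."""
    return {
        "frontend": {"domains": [domain]},
        "backend": {
            "targets": [{"hostname": "request.otoroshi.io", "port": 443, "tls": True}]
        },
        "plugins": [
            {"enabled": True, "plugin": "cp:otoroshi.next.plugins.OverrideHost", "config": {}},
            llm_plugin("OpenAiCompatProxy", "/v1/chat/completions", refs),
            llm_plugin("OpenAiCompatModels", "/v1/models", refs),
        ],
    }


route = build_llm_route("llm.my-domain.com", ["provider_openai_1"])
print(json.dumps(route, indent=2))
```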